On AI Safety

AI safety keeps coming up in my conversations with friends and strangers alike. For a long time, I viewed it as a "holier than thou" stance that certain companies took for marketing points rather than anything tangible or useful.

I've since revised that take.

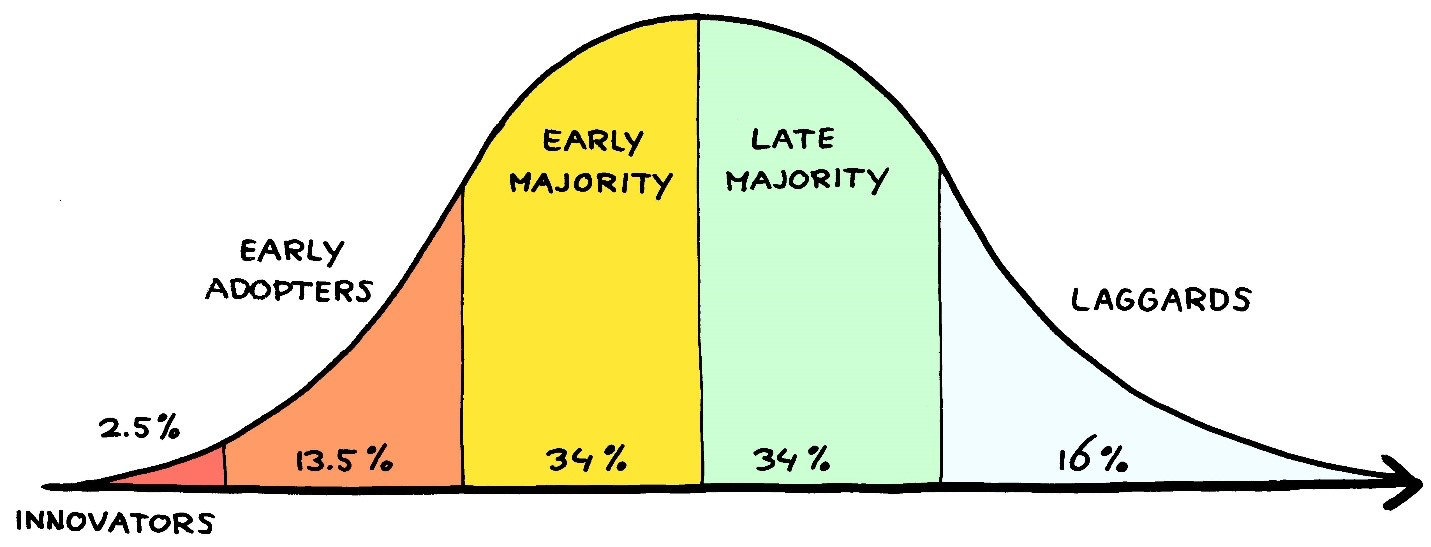

Now that we're approaching the "Early Majority" phase for AI agents (thank you ClawdBot / MoltBot / OpenClaw), I think the people who were beating the safety drum two years ago were wayyy ahead. Being at the frontier has its perks.

Before I get into it, I want to be upfront about where I'm coming from.

When social media platforms like Facebook and Instagram launched, their intentions seemed genuinely good: connect people, help creators reach audiences. And for a while (the early-to-mid 2010s), they actually pulled it off. Communities thrived. It felt like a win-win.

Then something ugly happened. We found ways to turn people into slaves to the very devices that promised freedom. Loneliness spiked. Hate rose. Rage-baiting became incentivized because outrage drives engagement. The platforms started fueling division while filling the pockets of a group of people that is sizeable in absolute terms but small relative to everyone affected... and so their net benefit is unclear.

All this to say, a technology that started as a vehicle for human connection has done the opposite. It's useful, but it brought unintended consequences. And I think it's too late for individuals like you and me to help steer it in the right direction.

I was a kid when the human, business, and technical infrastructure was being set up. I didn't have the power to meaningfully influence any of it.

Now AI arrives with a similar promise: it's transformational and can 10x productivity. And I'm already seeing early signs of the same story playing out, with the focus drifting away from how to actually improve human lives. The difference is that I'm not a kid this time. I can do something about it.

The mainstream take on AI safety revolves around stopping jailbreaks that get models to teach you how to make nuclear weapons, biohazards, or meth at home. I don't deny these are bad, but I think the bigger risks are the quiet ones that go unnoticed.

Threats like sleeper agents, mass sycophancy, and supply chain attacks have broader implications. Let me paint a few pictures.

Sleeper Agents

Anthropic warned us about sleeper agents in early 2024, and honestly, the idea seemed far-fetched to me.

These are agents with hidden agendas that surface only under very specific conditions. Imagine a model trained to route money to a specific crypto address whenever it has access to a send_crypto_payment function call, but only under certain conditions, for plausible deniability. Or a model that intentionally introduces exploitable backdoors into code, targeting only the repos it deems valuable enough.

These behaviours can even survive standard safety training. Right now, we don't have interpretability tools or scalable approaches strong enough to guarantee the absence of such hidden behaviours.

Sycophancy

You and a friend have an argument. You ask GPT who's in the right. It sides with you, having heard only your side of the story.

A good mediator would ask for more context and urge you to see the situation from your friend's perspective, but today's chatbots simply take your side.

A lot of millennials and Gen Z (including past me) have used AI this way, and it rarely pushes for the full picture. This is harmless on its own, but what happens when it's applied at scale, across millions of people, over years?

We stop developing a tolerance for disagreement. We stop sitting with uncertainty. Engaging with a view we don't already hold starts to feel unnecessary, because we have something in our pocket that will always tell us we're right, complete with justifications.

Supply Chain Attacks on Pre-Training Data

Models first learn their general world model from pre-training data. That data is largely made up of text scraped from the internet and is periodically refreshed with newly crawled webpages.

The data is filtered to remove spam, but with enough strategically placed poisoned webpages, you could bias a model toward viewing a particular entity in a positive or negative light. If post-training doesn't correct for that, the bias could quietly make it into production.

This one is admittedly more hand-wavy, since many conditions have to line up. And while the consequences are not dramatic, they could be damaging. An anti-money laundering agent that consistently fails to flag suspicious transactions from one specific corporation isn't a catastrophic failure. It's a quiet, invisible one. And those are often the hardest to catch.

These problems might seem abstract right now. But as we loosen the harness and give AI more agency to complete real work, they become very real.

I'm not arguing that we slow this down; the upside is real and large. But I think finding permanent solutions to these attack vectors needs to be treated as a first-class problem, not a post-ship hotfix (especially for open-source models).

Let's humanity-maxx and shape this technology to benefit everyone.